Simon Willison's Weblog

Author: Simon Willison


Featured


TimeScope: How Long Can Your Video Large Multimodal Model Go?

Announcing Toad - a universal UI for agentic coding in the terminal

1KB JS Numbers Station

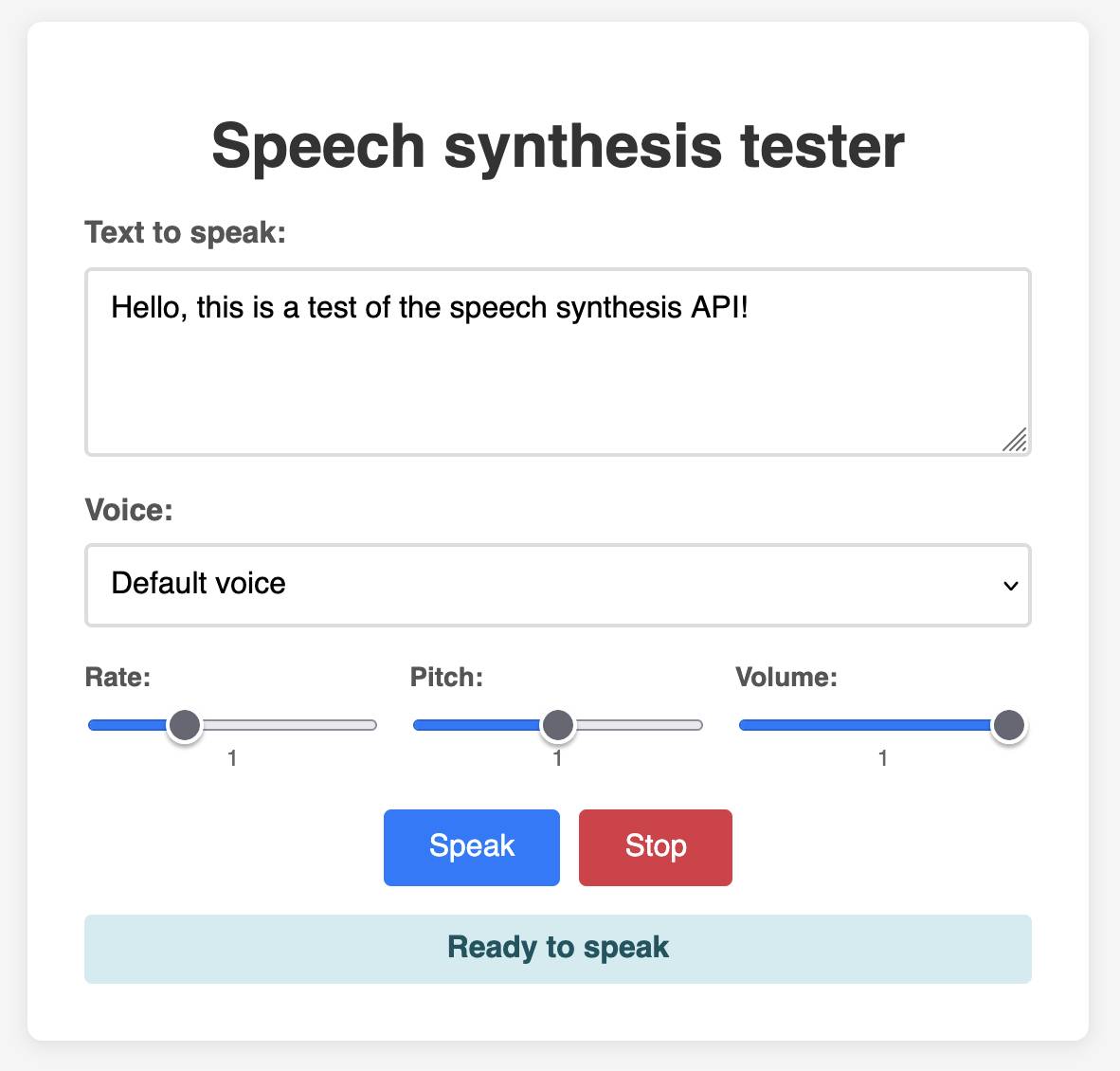

This inspired me to vibe code up this playground interface for that API using Claude:

Tags: javascript, text-to-speech, tools, ai, generative-ai, llms, terence-eden, vibe-coding

Quoting Dave White

like, one day you discover you can talk to dogs. it's fun and interesting so you do it more, learning the intricacies of their language and their deepest customs. you learn other people are surprised by what you can do. you have never quite fit in, but you learn people appreciate your ability and want you around to help them. the dogs appreciate you too, the only biped who really gets it. you assemble for yourself a kind of belonging. then one day you wake up and the universal dog translator is for sale at walmart for $4.99

— Dave White, a mathematician, on the OpenAI IMO gold medal

Quoting ICML 2025

Submitting a paper with a "hidden" prompt is scientific misconduct if that prompt is intended to obtain a favorable review from an LLM. The inclusion of such a prompt is an attempt to subvert the peer-review process. Although ICML 2025 reviewers are forbidden from using LLMs to produce their reviews of paper submissions, this fact does not excuse the attempted subversion. (For an analogous example, consider that an author who tries to bribe a reviewer for a favorable review is engaging in misconduct even though the reviewer is not supposed to accept bribes.) Note that this use of hidden prompts is distinct from those intended to detect if LLMs are being used by reviewers; the latter is an acceptable use of hidden prompts.

— ICML 2025, Statement about subversive hidden LLM prompts

Tags: ai-ethics, prompt-injection, generative-ai, ai, llms

Qwen3-Coder: Agentic Coding in the World

Qwen/Qwen3-235B-A22B-Instruct-2507

Subliminal Learning: Language Models Transmit Behavioral Traits via Hidden Signals in Data

Our contribution to a global environmental standard for AI

Gemini 2.5 Flash-Lite is now stable and generally available

Textual v4.0.0: The Streaming Release

tidwall/pogocache

Advanced version of Gemini with Deep Think officially achieves gold-medal standard at the International Mathematical Olympiad

Quoting Daniel Litt

Coding with LLMs in the summer of 2025 (an update)

Quoting Armin Ronacher

Every day someone becomes a programmer because they figured out how to make ChatGPT build something. Lucky for us: in many of those cases the AI picks Python. We should treat this as an opportunity and anticipate an expansion in the kinds of people who might want to attend a Python conference. Yet many of these new programmers are not even aware that programming communities and conferences exist. It’s in the Python community’s interest to find ways to pull them in.

Tags: pycon, ai, llms, vibe-coding, ai-assisted-programming, python, generative-ai, armin-ronacher

Quoting Tim Sweeney

There’s a bigger opportunity in computer science and programming (academically conveyed or self-taught) now than ever before, by far, in my opinion. The move to AI is like replacing shovels with bulldozers. Every business will benefit from this and they’ll need people to do it.

— Tim Sweeney, Epic Games

Tags: ai-assisted-programming, careers, ai

OpenAI's gold medal performance on the International Math Olympiad

New tags

A few months ago I added a tool to my blog for bulk-applying tags to old content. It works as an extension to my existing search interface, letting me run searches and then quickly apply a tag to relevant results.

Since adding this I've been much more aggressive in categorizing my older content, including adding new tags when I spot an interesting trend that warrants its own page.

Today I added system-prompts and applied it to 41 existing posts that talk about system prompts for LLM systems, including a bunch that directly quote system prompts that have been deliberately published or leaked.

Other tags I've added recently include press-quotes for times I've been quoted in the press, agent-definitions for my ongoing collection of different ways people define "agents" and paper-review for posts where I review an academic paper.
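The search-then-tag workflow behind that bulk-tagging tool can be sketched roughly as follows. This is a minimal illustration, not the blog's actual implementation: it assumes a SQLite database with hypothetical `entries`, `tags` and `entry_tags` tables, and the `search` and `bulk_apply_tag` helpers are invented for this example.

```python
import sqlite3

# Hypothetical schema for illustration only; the real blog's schema is not shown here.
SCHEMA = """
CREATE TABLE entries (id INTEGER PRIMARY KEY, title TEXT, body TEXT);
CREATE TABLE tags (id INTEGER PRIMARY KEY, tag TEXT UNIQUE);
CREATE TABLE entry_tags (
    entry_id INTEGER REFERENCES entries(id),
    tag_id INTEGER REFERENCES tags(id),
    PRIMARY KEY (entry_id, tag_id)
);
"""


def search(conn, term):
    """Return (id, title) rows for entries whose title or body contains term."""
    pattern = f"%{term}%"
    return conn.execute(
        "SELECT id, title FROM entries WHERE title LIKE ? OR body LIKE ? ORDER BY id",
        (pattern, pattern),
    ).fetchall()


def bulk_apply_tag(conn, tag, entry_ids):
    """Create the tag if it is missing, then attach it to every given entry.

    INSERT OR IGNORE makes re-tagging an already-tagged entry a no-op.
    Returns the number of new entry/tag links that were created.
    """
    conn.execute("INSERT OR IGNORE INTO tags (tag) VALUES (?)", (tag,))
    (tag_id,) = conn.execute("SELECT id FROM tags WHERE tag = ?", (tag,)).fetchone()
    before = conn.total_changes
    conn.executemany(
        "INSERT OR IGNORE INTO entry_tags (entry_id, tag_id) VALUES (?, ?)",
        [(entry_id, tag_id) for entry_id in entry_ids],
    )
    conn.commit()
    return conn.total_changes - before


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany(
        "INSERT INTO entries (title, body) VALUES (?, ?)",
        [
            ("Claude's system prompt", "Notes on the published system prompt"),
            ("Firefox WebGPU", "WebGPU ships on Windows"),
        ],
    )
    matches = search(conn, "system prompt")
    # Tag every search result in one go; prints 1 (one new link created).
    print(bulk_apply_tag(conn, "system-prompts", [row[0] for row in matches]))
```

Keeping the tagging step idempotent means the same search can be re-run and re-tagged safely as new matching posts appear.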

Quoting Steve Yegge

So one of my favorite things to do is give my coding agents more and more permissions and freedom, just to see how far I can push their productivity without going too far off the rails. It's a delicate balance. I haven't given them direct access to my bank account yet. But I did give one access to my Google Cloud production instances and systems. And it promptly wiped a production database password and locked my network. [...]

The thing is, autonomous coding agents are extremely powerful tools that can easily go down very wrong paths. Running them with permission checks disabled is dangerous and stupid, and you should only do it if you are willing to take dangerous and stupid risks with your code and/or production systems.

Tags: vibe-coding, steve-yegge, generative-ai, ai-agents, ai, llms

Quoting Paul Kedrosky

One analyst recently speculated (via Ed Conard) that, based on Nvidia's latest datacenter sales figures, AI capex may be ~2% of US GDP in 2025, given a standard multiplier. [...]

Capital expenditures on AI data centers is likely around 20% of the peak spending on railroads, as a percentage of GDP, and it is still rising quickly. [...]

Regardless of what one thinks about the merits of AI or explosive datacenter expansion, the scale and pace of capital deployment into a rapidly depreciating technology is remarkable. These are not railroads—we aren’t building century-long infrastructure. AI datacenters are short-lived, asset-intensive facilities riding declining-cost technology curves, requiring frequent hardware replacement to preserve margins.

— Paul Kedrosky, Honey, AI Capex is Eating the Economy

Tags: ai-ethics, economics, ai, paul-kedrosky

How to run an LLM on your laptop

Vibe scraping and vibe coding a schedule app for Open Sauce 2025 entirely on my phone

Quoting Terence Eden

The modern workforce shouldn't be flinging copies to each other. A copy is outdated the moment it is downloaded. A copy has no protection against illicit reading. A copy can never be revoked.

Data shouldn't live in a file on a laptop. It shouldn't be a single file on a network share. Data is a living beast. Data needs to live in a database - not an Excel file. Access should be granted for each according to their needs.

— Terence Eden, We've got to stop sending files to each other

Tags: terence-eden, files

Voxtral

common-pile/caselaw_access_project


Enormous openly licensed (I believe this is almost all public domain) training dataset of US legal cases:

This dataset contains 6.7 million cases from the Caselaw Access Project and Court Listener. The Caselaw Access Project consists of nearly 40 million pages of U.S. federal and state court decisions and judges’ opinions from the last 365 years. In addition, Court Listener adds over 900 thousand cases scraped from 479 courts.

It's distributed as gzipped newline-delimited JSON.
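Gzipped newline-delimited JSON can be streamed one record at a time without decompressing the whole file first. The sketch below uses only the Python standard library; the function name `iter_ndjson_gz` is hypothetical, and it makes no assumptions about the dataset's field names, returning each record as a plain dict.

```python
import gzip
import json
from typing import Iterator


def iter_ndjson_gz(path: str) -> Iterator[dict]:
    """Yield one parsed JSON object per non-blank line of a gzipped NDJSON file.

    Reading in text mode ("rt") decompresses lazily, so memory use stays flat
    even for very large files.
    """
    with gzip.open(path, "rt", encoding="utf-8") as f:
        for line in f:
            line = line.strip()
            if line:  # tolerate blank lines, e.g. a trailing newline
                yield json.loads(line)
```

For example, `sum(1 for _ in iter_ndjson_gz(path))` counts the records in one downloaded file without holding them all in memory.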

This was gathered as part of the Common Pile and used as part of the training dataset for the Comma family of LLMs.

Via @enricoshippole

Tags: law, ai, generative-ai, llms, training-data

Fell in a hole, got out.

Shipping WebGPU on Windows in Firefox 141


WebGPU is coming to Mac and Linux soon as well:

Although Firefox 141 enables WebGPU only on Windows, we plan to ship WebGPU on Mac and Linux in the coming months, and finally on Android.

From this article I learned that it's already available in Firefox Nightly:

Note that WebGPU has been available in Firefox Nightly on all platforms other than Android for quite some time.

I tried the most recent Nightly on my Mac and now the Github Issue Generator running locally w/ SmolLM2 & WebGPU demo (previously) works! Firefox stable gives me an error message saying "Error: WebGPU is not supported in your current environment, but it is necessary to run the WebLLM engine."

The Firefox implementation is based on wgpu, an open source Rust WebGPU library.

Via Hacker News

Documenting what you're willing to support (and not)


Devious company culture hack from Rachel Kroll:

At some point, I realized that if I wrote a wiki page and documented the things that we were willing to support, I could wait about six months and then it would be like it had always been there. Enough people went through the revolving doors of that place such that six months' worth of employee turnover was sufficient to make it look like a whole other company. All I had to do was write it, wait a bit, then start citing it when needed.

You can have an unreasonable amount of influence by being the person who writes stuff down.

Via Hacker News

Tags: documentation, rachel-kroll