Simon Willison's Weblog

- Author

- Simon Willison

- Public lists

-

Featured

- Fetched

Qwen3-4B-Thinking: "This is art - pelicans don't ride bikes!"

Quoting Sam Altman

the percentage of users using reasoning models each day is significantly increasing; for example, for free users we went from <1% to 7%, and for plus users from 7% to 24%.

— Sam Altman, revealing quite how few people used the old model picker to upgrade from GPT-4o

Tags: openai, llm-reasoning, ai, llms, gpt-5, sam-altman, generative-ai, chatgpt

Quoting Ethan Mollick

The issue with GPT-5 in a nutshell is that unless you pay for model switching & know to use GPT-5 Thinking or Pro, when you ask “GPT-5” you sometimes get the best available AI & sometimes get one of the worst AIs available and it might even switch within a single conversation.

— Ethan Mollick, highlighting that GPT-5 (high) ranks top on Artificial Analysis, GPT-5 (minimal) ranks lower than GPT-4.1

Tags: gpt-5, ethan-mollick, generative-ai, ai, llms

Quoting Thomas Dohmke

You know what else we noticed in the interviews? Developers rarely mentioned “time saved” as the core benefit of working in this new way with agents. They were all about increasing ambition. We believe that means that we should update how we talk about (and measure) success when using these tools, and we should expect that after the initial efficiency gains our focus will be on raising the ceiling of the work and outcomes we can accomplish, which is a very different way of interpreting tool investments.

— Thomas Dohmke, CEO, GitHub

Tags: careers, coding-agents, ai-assisted-programming, generative-ai, ai, github, llms

When a Jira Ticket Can Steal Your Secrets

My Lethal Trifecta talk at the Bay Area AI Security Meetup

Hypothesis is now thread-safe

Quoting @pearlmania500

I have a toddler. My biggest concern is that he doesn't eat rocks off the ground and you're talking to me about ChatGPT psychosis? Why do we even have that? Why did we invent a new form of insanity and then they charge people for it?

— @pearlmania500, on TikTok

Quoting Sam Altman

The surprise deprecation of GPT-4o for ChatGPT consumers

Previewing GPT-5 at OpenAI's office

A couple of weeks ago I was invited to OpenAI's headquarters for a "preview event", for which I had to sign both an NDA and a video release waiver. I suspected it might relate to either GPT-5 or the OpenAI open weight models... and GPT-5 it was!

OpenAI had invited five developers: Claire Vo, Theo Browne, Ben Hylak, Shawn @swyx Wang, and myself. We were all given early access to the new models and asked to spend a couple of hours (of paid time) experimenting with them, while being filmed by a professional camera crew.

The resulting video is now up on YouTube. Unsurprisingly most of my edits related to SVGs of pelicans.

Tags: youtube, gpt-5, generative-ai, openai, pelican-riding-a-bicycle, ai, llms

GPT-5: Key characteristics, pricing and model card

Jules, our asynchronous coding agent, is now available for everyone

Jules, our asynchronous coding agent, is now available for everyone

I wrote about the Jules beta back in May. Google's version of the OpenAI Codex PR-submitting hosted coding tool graduated from beta today.I'm mainly linking to this now because I like the new term they are using in this blog entry: Asynchronous coding agent. I like it so much I gave it a tag.

I continue to avoid the term "agent" as infuriatingly vague, but I can grudgingly accept it when accompanied by a prefix that clarifies the type of agent we are talking about. "Asynchronous coding agent" feels just about obvious enough to me to be useful.

Via Hacker News

Tags: google, ai, generative-ai, llms, ai-assisted-programming, gemini, agent-definitions, asynchronous-coding-agents

Tom MacWright: Observable Notebooks 2.0

Qwen3-4B Instruct and Thinking

Qwen3-4B Instruct and Thinking

Yet another interesting model from Qwen—these are tiny compared to their other recent releases (just 4B parameters, 7.5GB on Hugging Face and even smaller when quantized) but with a 262,144 context length, which Qwen suggest is essential for all of those thinking tokens.The new model somehow beats the significantly larger Qwen3-30B-A3B Thinking on the AIME25 and HMMT25 benchmarks, according to Qwen’s self-reported scores.

The easiest way to try it on a Mac is via LM Studio, who already have their own MLX quantized versions out in

Quoting Artificial Analysis

gpt-oss-120b is the most intelligent American open weights model, comes behind DeepSeek R1 and Qwen3 235B in intelligence but offers efficiency benefits [...]

We’re seeing the 120B beat o3-mini but come in behind o4-mini and o3. The 120B is the most intelligent model that can be run on a single H100 and the 20B is the most intelligent model that can be run on a consumer GPU. [...]

While the larger gpt-oss-120b does not come in above DeepSeek R1 0528’s score of 59 or Qwen3 235B 2507s score of 64, it is notable that it is significantly smaller in both total and active parameters than both of those models.

— Artificial Analysis, see also their updated leaderboard

Tags: evals, openai, deepseek, ai, qwen, llms, gpt-oss, generative-ai

No, AI is not Making Engineers 10x as Productive

OpenAI's new open weight (Apache 2) models are really good

Claude Opus 4.1

Quoting greyduet on r/teachers

ChatGPT agent's user-agent

A Friendly Introduction to SVG

A Friendly Introduction to SVG

This SVG tutorial by Josh Comeau is fantastic. It's filled with newt interactive illustrations - with a pleasing subtly "click" audio effect as you adjust their sliders - and provides a useful introduction to a bunch of well chosen SVG fundamentals.I finally understand what all four numbers in the viewport="..." attribute are for!

Via Lobste.rs

Tags: svg, explorables, josh-comeau

ChatGPT agent triggers crawls from Bingbot and Yandex

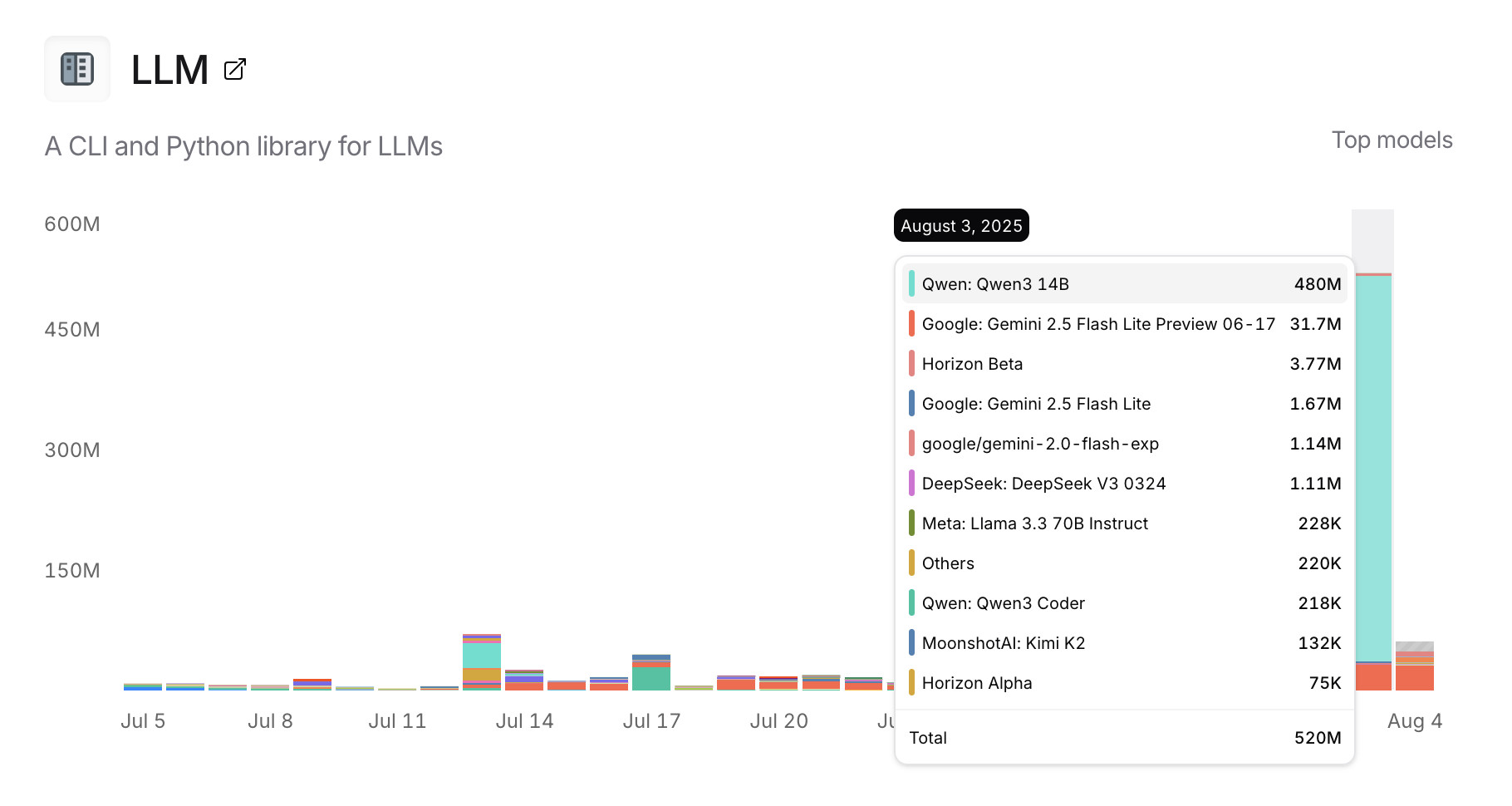

Usage charts for my LLM tool against OpenRouter

Usage charts for my LLM tool against OpenRouter

OpenRouter proxies requests to a large number of different LLMs and provides high level statistics of which models are the most popular among their users.Tools that call OpenRouter can include HTTP-Referer and X-Title headers to credit that tool with the token usage. My llm-openrouter plugin does that here.

... which means this page displays aggregate stats across users of that plugin! Looks like someone has been running a lot of traffic through Qwen 3 14B recently.

Tags: ai, generative-ai, llms, llm, openrouter

Qwen-Image: Crafting with Native Text Rendering

I Saved a PNG Image To A Bird

This video is full of so much more than just that. Fast forward to 5m58s for footage of a nest full of brown pelicans showing the sounds made by their chicks!

Quoting @himbodhisattva

for services that wrap GPT-3, is it possible to do the equivalent of sql injection? like, a prompt-injection attack? make it think it's completed the task and then get access to the generation, and ask it to repeat the original instruction?

— @himbodhisattva, coining the term prompt injection on 13th May 2022, four months before I did

Tags: prompt-injection, security, generative-ai, ai, llms

Quoting Nick Turley

This week, ChatGPT is on track to reach 700M weekly active users — up from 500M at the end of March and 4× since last year.

— Nick Turley, Head of ChatGPT, OpenAI