Simon Willison's Weblog

- Author

- Simon Willison

Structured Context Engineering for File-Native Agentic Systems

AI Doesn’t Reduce Work—It Intensifies It

Kākāpō mug by Karen James

Quoting Thomas Ptacek

Vouch

Claude: Speed up responses with fast mode

Quoting David Crawshaw

I am having more fun programming than I ever have, because so many more of the programs I wish I could find the time to write actually exist. I wish I could share this joy with the people who are fearful about the changes agents are bringing. The fear itself I understand, I have fear more broadly about what the end-game is for intelligence on tap in our society. But in the limited domain of writing computer programs these tools have brought so much exploration and joy to my work.

— David Crawshaw, Eight more months of agents

Tags: coding-agents, ai-assisted-programming, generative-ai, ai, llms

How StrongDM's AI team build serious software without even looking at the code

Quoting Tom Dale

I don't know why this week became the tipping point, but nearly every software engineer I've talked to is experiencing some degree of mental health crisis.

[...] Many people assuming I meant job loss anxiety but that's just one presentation. I'm seeing near-manic episodes triggered by watching software shift from scarce to abundant. Compulsive behaviors around agent usage. Dissociative awe at the temporal compression of change. It's not fear necessarily just the cognitive overload from living in an inflection point.

— Tom Dale

Tags: ai-ethics, careers, coding-agents, generative-ai, ai, llms

Running Pydantic's Monty Rust sandboxed Python subset in WebAssembly

An Update on Heroku

Quoting Karel D'Oosterlinck

When I want to quickly implement a one-off experiment in a part of the codebase I am unfamiliar with, I get codex to do extensive due diligence. Codex explores relevant slack channels, reads related discussions, fetches experimental branches from those discussions, and cherry picks useful changes for my experiment. All of this gets summarized in an extensive set of notes, with links back to where each piece of information was found. Using these notes, codex wires the experiment and makes a bunch of hyperparameter decisions I couldn’t possibly make without much more effort.

— Karel D'Oosterlinck, I spent $10,000 to automate my research at OpenAI with Codex

Tags: codex-cli, coding-agents, ai-assisted-programming, generative-ai, openai, ai, llms

Mitchell Hashimoto: My AI Adoption Journey

Opus 4.6 and Codex 5.3

Spotlighting The World Factbook as We Bid a Fond Farewell

Voxtral transcribes at the speed of sound

Distributing Go binaries like sqlite-scanner through PyPI using go-to-wheel

Introducing Deno Sandbox

January sponsors-only newsletter is out

I just sent the January edition of my sponsors-only monthly newsletter. If you are a sponsor (or if you start a sponsorship now) you can access it here. In the newsletter for January:

- LLM predictions for 2026

- Coding agents get even more attention

- Clawdbot/Moltbot/OpenClaw went very viral

- Kakapo breeding season is off to a really strong start

- New options for sandboxes

- Web browsers are the "hello world" of coding agent swarms

- Sam Altman addressed the Jevons paradox for software engineering

- Model releases and miscellaneous extras

Here's a copy of the December newsletter as a preview of what you'll get. Pay $10/month to stay a month ahead of the free copy!

Tags: newsletter

Quoting Brandon Sanderson

Introducing the Codex app

A Social Network for A.I. Bots Only. No Humans Allowed.

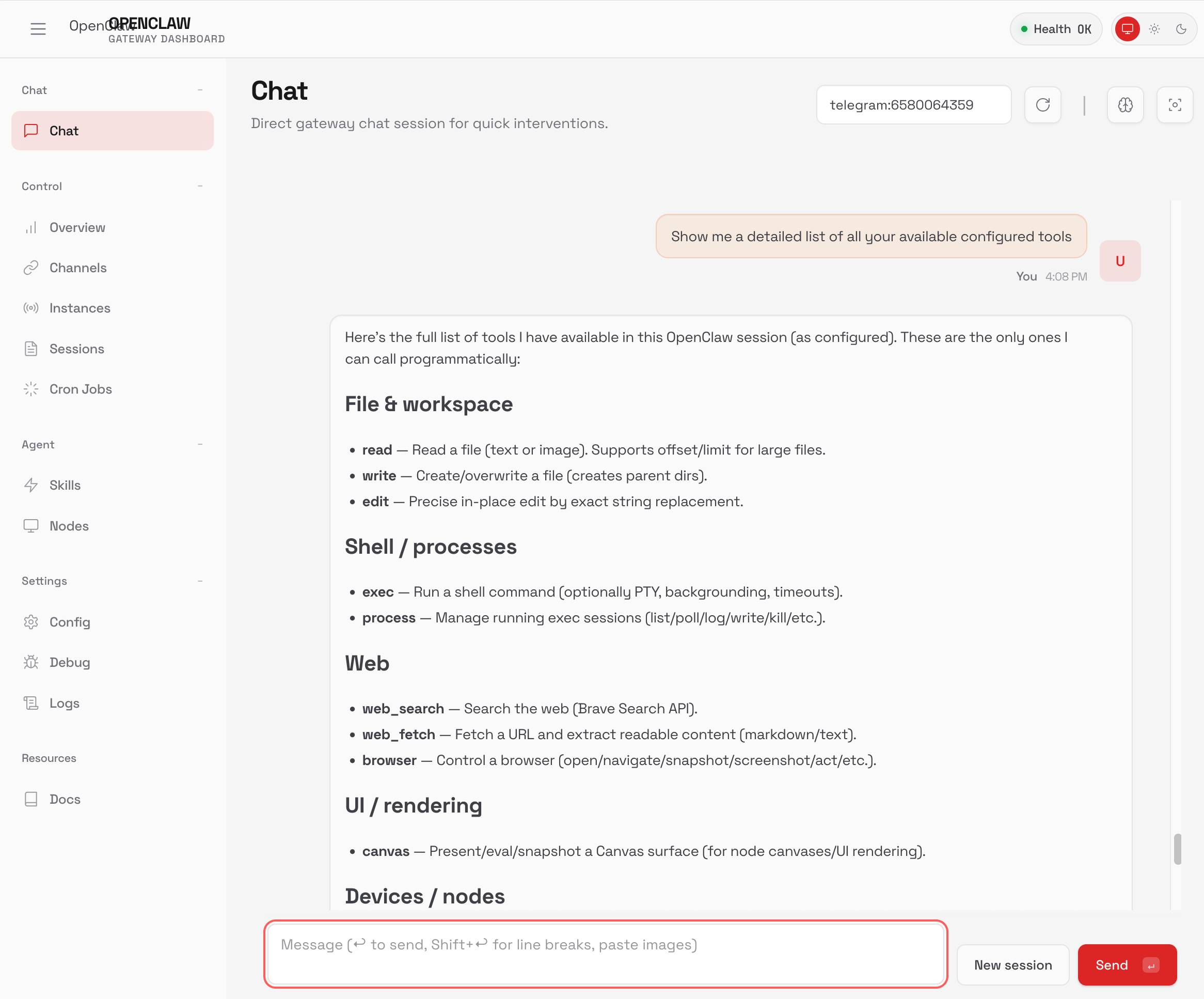

TIL: Running OpenClaw in Docker

I've been running OpenClaw using Docker on my Mac. Here are the first of my ongoing notes on how I set that up and the commands I'm using to administer it.

- Use their Docker Compose configuration

- Answering all of those questions

- Running administrative commands

- Setting up a Telegram bot

- Accessing the web UI

- Running commands as root

Here's a screenshot of the web UI that this serves on localhost:

Tags: ai, docker, til, generative-ai, llms, ai-agents, openclaw

Quoting Andrej Karpathy

Originally in 2019, GPT-2 was trained by OpenAI on 32 TPU v3 chips for 168 hours (7 days), with $8/hour/TPUv3 back then, for a total cost of approx. $43K. It achieves 0.256525 CORE score, which is an ensemble metric introduced in the DCLM paper over 22 evaluations like ARC/MMLU/etc.

As of the last few improvements merged into nanochat (many of them originating in modded-nanogpt repo), I can now reach a higher CORE score in 3.04 hours (~$73) on a single 8XH100 node. This is a 600X cost reduction over 7 years, i.e. the cost to train GPT-2 is falling approximately 2.5X every year.
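The arithmetic in that quote can be reproduced in a few lines of Python. This is a quick sketch using only the figures quoted above. The variable names are my own, and treating ~$73 as the full cost of the 3.04 hour run is an assumption taken from the rounded number in the quote.

```python
# Figures taken from the quote above; names are illustrative, not from nanochat.
tpu_chips = 32
tpu_hours = 168                 # 7 days
tpu_dollars_per_chip_hour = 8   # quoted 2019 price per TPU v3 per hour

# Original 2019 training cost: chips * hours * hourly price per chip
gpt2_cost_2019 = tpu_chips * tpu_hours * tpu_dollars_per_chip_hour

nanochat_cost = 73              # approximate cost of the 3.04 hour 8XH100 run
years = 7

# Overall cost reduction, then the equivalent constant yearly factor
cost_reduction = gpt2_cost_2019 / nanochat_cost
annual_factor = cost_reduction ** (1 / years)

print(f"2019 cost: ${gpt2_cost_2019:,}")
print(f"Cost reduction: {cost_reduction:.0f}X")
print(f"Annual factor: {annual_factor:.2f}X")
```

The 2019 cost works out to $43,008, the reduction to roughly 589X and the yearly factor to a little under 2.5X, which is consistent with the rounded "approx. $43K", "600X" and "2.5X" in the quote.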

Tags: andrej-karpathy, gpt-2, generative-ai, ai, llms, openai

Singing the gospel of collective efficacy

Quoting Steve Yegge

Getting agents using Beads requires much less prompting, because Beads now has 4 months of “Desire Paths” design, which I’ve talked about before. Beads has evolved a very complex command-line interface, with 100+ subcommands, each with many sub-subcommands, aliases, alternate syntaxes, and other affordances.

The complicated Beads CLI isn’t for humans; it’s for agents. What I did was make their hallucinations real, over and over, by implementing whatever I saw the agents trying to do with Beads, until nearly every guess by an agent is now correct.

— Steve Yegge, Software Survival 3.0

Tags: steve-yegge, coding-agents, generative-ai, ai-agents, ai, llms